Some thoughts about Windows Memory Management

Malware analysis and digital forensic analysis are processes that often need the analyst to look into system memory.

In this regard, a good analyst must have at least a basic knowledge of Windows Memory Management.

In this post I will try to explain the mechanisms used to map executable modules into the address space and the most common exploitation techniques.

Some fundamentals

Just some basic information needed to understand the remainder of this article.

The paging

Paging is a memory management scheme by which a computer stores and retrieves data from secondary storage for use in main memory. It is used to implement virtual address spaces and to abstract them from the underlying physical memory.

Virtual memory is divided into equal-sized pages and physical memory into frames of the same size, and each page can be mapped to a frame.

Pages that are not currently mapped to a frame are temporarily stored on secondary storage (e.g. in a pagefile on the hard disk): when such a page is needed, the OS reads its contents back from secondary storage into a physical frame.

The mapping between virtual and physical addresses is normally done transparently to the running applications by the memory management unit (MMU), which is a dedicated part of the CPU.

Under particular conditions, the hardware cannot resolve the mapping without the help of the OS: in this case, a page fault is raised and handled by the page fault handler of the OS.

To manage the paging mechanism, the MMU uses specific data structures, the page tables. The address spaces of different processes are isolated from each other, so each process uses its own instances of these structures.

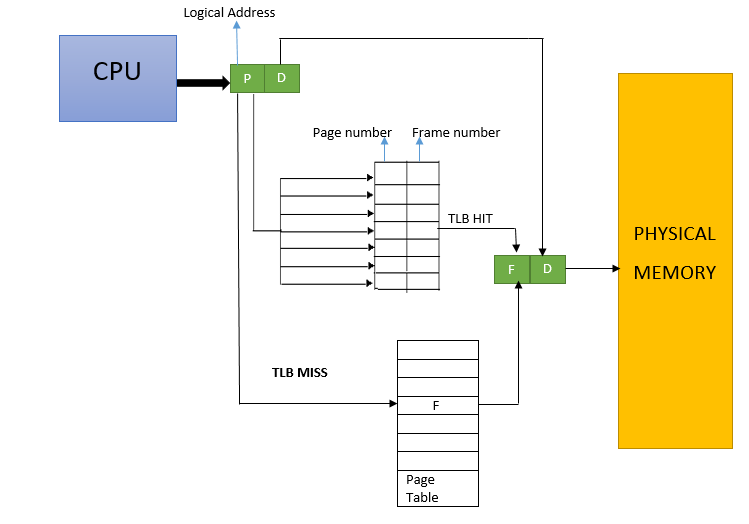

Each page table entry (PTE) describes the memory range of one associated page. To locate the PTE associated with a particular memory address, the address is split into two parts: the upper bits are an index into the page table, and the lower bits are used as an offset from the start address of the corresponding frame.
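The address split described above can be sketched in a few lines of Python. This is a simplified model that assumes 4 KB pages and a single-level page table (real x86 systems walk several table levels); the names `split_address`, `translate` and the sample page table are made up for illustration.

```python
PAGE_SIZE = 4096                            # assumption: 4 KB pages
OFFSET_BITS = PAGE_SIZE.bit_length() - 1    # 12 low bits hold the offset

def split_address(virtual_address):
    """Return (page_index, offset) for a single-level page table."""
    page_index = virtual_address >> OFFSET_BITS
    offset = virtual_address & (PAGE_SIZE - 1)
    return page_index, offset

def translate(virtual_address, page_table):
    """Look up the frame for the page and rebuild the physical address."""
    page_index, offset = split_address(virtual_address)
    frame_number = page_table[page_index]   # KeyError models an unmapped page
    return (frame_number << OFFSET_BITS) | offset

# Hypothetical page table: page 0x12345 is backed by frame 0x42.
page_table = {0x12345: 0x42}
print(hex(translate(0x12345ABC, page_table)))  # prints 0x42abc
```

A lookup for a page that is missing from the dictionary raises `KeyError`, which plays the role of the page fault in this toy model.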

The TLB (Translation Lookaside Buffer)

A translation lookaside buffer is a memory cache used to reduce the time necessary to access a memory location.

Part of the MMU, the TLB stores recent translations of virtual addresses to physical addresses and may reside between the CPU and the CPU cache, between the CPU cache and main memory, or between the different levels of a multi-level cache.
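To make the caching idea concrete, here is a toy model of a TLB as a small cache in front of a page table. It is only a sketch: the class name `TinyTLB`, the capacity and the least-recently-used eviction policy are assumptions chosen for illustration, and real hardware uses its own replacement strategies.

```python
from collections import OrderedDict

class TinyTLB:
    """Toy TLB: a small LRU cache of page -> frame translations."""

    def __init__(self, page_table, capacity=4):
        self.page_table = page_table
        self.capacity = capacity
        self.entries = OrderedDict()
        self.hits = 0
        self.misses = 0

    def lookup(self, page):
        if page in self.entries:
            self.hits += 1
            self.entries.move_to_end(page)      # mark as most recently used
            return self.entries[page]
        self.misses += 1
        frame = self.page_table[page]           # slow path: page table walk
        self.entries[page] = frame
        if len(self.entries) > self.capacity:
            self.entries.popitem(last=False)    # evict least recently used
        return frame

tlb = TinyTLB({0: 7, 1: 3, 2: 9}, capacity=2)
for page in [0, 0, 1, 0, 2, 1]:
    tlb.lookup(page)
print(tlb.hits, tlb.misses)  # prints 2 4
```

Repeated accesses to the same page are served from the cache, while a page that has been evicted costs a full table walk again.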

Sometimes there are distinct TLBs for data and instruction addresses: the x86 architecture offers a DTLB for the former and an ITLB for the latter.

Normally the DTLB and ITLB are synchronized, but there are cases in which they are deliberately forced out of sync, for example to start packed applications or to implement memory cloaking, a technique in which the same virtual address resolves to different physical frames depending on whether it is read as data or fetched as code.

The Windows Memory Management

Windows has both physical and virtual memory. Memory is managed in pages, with processes demanding it as necessary. Memory pages are 4KB in size (both for physical and virtual memory).

On 32-bit (x86) architectures, the total addressable memory is 4GB, divided equally into user space and system space.

Pages in system space can only be accessed from kernel mode; user-mode processes (application code) can only access pages that are marked as accessible from user mode.

There is one single 2GB system space that is mapped into the address space for all processes; each process also has its own 2GB user space.
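As a small illustration of this split, the sketch below classifies a 32-bit address by region. It assumes the default 2 GB / 2 GB layout described above and ignores details such as reserved guard ranges; the function name `address_region` is made up for the example.

```python
USER_SPACE_LIMIT = 0x80000000   # assumption: default 2 GB / 2 GB split

def address_region(address):
    """Classify a 32-bit virtual address as 'user' or 'system'."""
    if not 0 <= address <= 0xFFFFFFFF:
        raise ValueError("not a 32-bit address")
    return "user" if address < USER_SPACE_LIMIT else "system"

print(address_region(0x00401000))  # prints user
print(address_region(0x804D7000))  # prints system
```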

With a 64-bit architecture, the total address space is theoretically 16 exabytes (one exabyte is 10^18 bytes, or 1 million terabytes), but for a variety of software and hardware architectural reasons, 64-bit Windows only supports 16TB today, split equally between user and system space.

Virtual Memory

Within a process, virtual memory is broken into three categories:

- private virtual memory: that which is not shared, such as the process heap

- shareable: memory mapped files

- free: memory with an as yet undefined use.

Private and shareable memory can also be flagged in two ways: reserved (a thread has plans to use this range but it isn’t available yet), and committed (available to use).
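The difference between reserved and committed memory can be observed with the Win32 `VirtualAlloc` function. The following sketch calls it through `ctypes`: it reserves a 64 KB range, commits only the first page, writes to it and releases everything. The helper name `reserve_then_commit` and the sizes are arbitrary choices for illustration, and on non-Windows platforms the function simply returns `None`.

```python
import ctypes
import sys

MEM_COMMIT = 0x1000
MEM_RESERVE = 0x2000
MEM_RELEASE = 0x8000
PAGE_NOACCESS = 0x01
PAGE_READWRITE = 0x04

def reserve_then_commit(reserve_size=64 * 1024, commit_size=4096):
    """Reserve a range, commit its first page, write to it, release it.

    Returns the base address on Windows, or None on other platforms.
    """
    if sys.platform != "win32":
        return None
    kernel32 = ctypes.WinDLL("kernel32", use_last_error=True)
    kernel32.VirtualAlloc.restype = ctypes.c_void_p
    kernel32.VirtualAlloc.argtypes = [ctypes.c_void_p, ctypes.c_size_t,
                                      ctypes.c_ulong, ctypes.c_ulong]
    kernel32.VirtualFree.restype = ctypes.c_int
    kernel32.VirtualFree.argtypes = [ctypes.c_void_p, ctypes.c_size_t,
                                     ctypes.c_ulong]

    # Reserved: the address range is claimed but not yet usable.
    base = kernel32.VirtualAlloc(None, reserve_size, MEM_RESERVE, PAGE_NOACCESS)
    if not base:
        raise ctypes.WinError(ctypes.get_last_error())
    try:
        # Committed: the first page is now backed and can be accessed.
        page = kernel32.VirtualAlloc(base, commit_size, MEM_COMMIT, PAGE_READWRITE)
        if not page:
            raise ctypes.WinError(ctypes.get_last_error())
        ctypes.memset(page, 0x41, commit_size)
        return base
    finally:
        kernel32.VirtualFree(base, 0, MEM_RELEASE)

print(reserve_then_commit())
```

Touching the range before the commit step would raise an access violation, because reserved pages have no backing storage yet.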

Memory exploitation techniques

So, now let's look at the best-known memory protection techniques, and at how attackers can try to bypass them.

No eXecute

The most widely used technique is called No eXecute (NX), first introduced with OpenBSD 3.3 in May 2003.

The aim of this technique is to enforce the distinction between data and code memory. Initially there was no differentiation between code and data, so each byte in memory could be used as code if its address was loaded into the instruction pointer, or as data if it was accessed by a load or store operation.

An unwanted side effect of this is that attackers are able to conceal malicious code as harmless data and then later on, with the help of some vulnerability, execute it as code.

NX protection is implemented in hardware on contemporary CPUs but can also be emulated in software. On Windows systems the feature is usually called DEP (Data Execution Prevention).

On newer x86/x64 machines there is one dedicated PTE flag that controls whether a certain page is executable or not.

If this flag is set, a page fault is raised on any attempt to execute code from the corresponding page.

Therefore, the operating system has to support this security feature in addition to the hardware.
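The role of the flag can be modeled in a few lines. The sketch below assumes the 64-bit PTE layout, where bit 63 is the no-execute bit and bit 0 marks the entry as present; the `PageFault` exception and the function names are invented for illustration and do not correspond to any real API.

```python
PTE_PRESENT = 1 << 0    # page is mapped to a frame
PTE_WRITABLE = 1 << 1   # page may be written
PTE_USER = 1 << 2       # page is accessible from user mode
PTE_NX = 1 << 63        # no-execute: instruction fetches are forbidden

class PageFault(Exception):
    """Stands in for the fault the CPU would deliver to the OS."""

def can_execute(pte):
    return bool(pte & PTE_PRESENT) and not (pte & PTE_NX)

def fetch_instruction(pte):
    """Model an instruction fetch from the page described by pte."""
    if not can_execute(pte):
        raise PageFault("instruction fetch from non-executable page")
    return "fetched"

code_page = PTE_PRESENT | PTE_USER
data_page = PTE_PRESENT | PTE_USER | PTE_WRITABLE | PTE_NX

print(fetch_instruction(code_page))   # prints fetched
try:
    fetch_instruction(data_page)
except PageFault as fault:
    print("page fault:", fault)
```

In this model it is the handler of `PageFault` that decides what happens next, which mirrors the point that the operating system has to cooperate with the hardware.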

In order to overcome the NX protection, attackers use different methods.

One very powerful way is to locate and execute useful instructions in one of the loaded system or application library modules. In Return Oriented Programming (ROP), for example, attackers do not call complete library functions; instead they use only small code chunks that are already present in the machine's memory and chain them together to implement the desired functionality.

These instruction sequences are called "gadgets".

Each gadget typically ends in a return instruction and is located in a subroutine within the existing program and/or shared library code.

Chained together, these gadgets allow an attacker to perform arbitrary operations on a machine.
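A very rough way to see where gadgets come from is to scan a code buffer for the x86 return opcode (`0xC3`) and collect the few bytes that precede it. The sketch below does only that; real tools also disassemble every candidate to check that it decodes into valid instructions. The function name `find_gadget_candidates` and the sample bytes are illustrative assumptions.

```python
RET = 0xC3  # opcode of the x86 near return instruction

def find_gadget_candidates(code, max_len=4):
    """Return (offset, bytes) for byte runs of up to max_len bytes plus a RET."""
    candidates = []
    for end, byte in enumerate(code):
        if byte != RET:
            continue
        for length in range(1, max_len + 1):
            start = end - length
            if start < 0:
                break
            candidates.append((start, code[start:end + 1]))
    return candidates

# pop eax; ret   followed by   pop ebx; pop ecx; ret
sample = bytes.fromhex("58c35b59c3")
for offset, gadget in find_gadget_candidates(sample):
    print(hex(offset), gadget.hex())
```

Because x86 instructions have variable length, a candidate may start in the middle of an original instruction, which is why the same buffer yields several overlapping byte runs.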

Address Space Layout Randomization

Address Space Layout Randomization (ASLR) is a mechanism that makes memory locations no longer fixed and predictable, but chosen randomly.

Consequently, the stack, the heap and the loaded modules will have different memory addresses each time a process is started, making it much harder for attackers to find usable memory locations, such as the beginning of a certain function or the effective location of malicious code or data.

Accordingly, when the memory layout is randomized and an exploit does not take this into account, the process is more likely to crash than to be exploited.
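The effect on an exploit that relies on a hardcoded address can be shown with a small simulation. Everything in it is an assumption made for illustration: the preferred base, the 64 KB alignment, the 256 possible load positions and the function offset do not describe any specific Windows version.

```python
import random

PREFERRED_BASE = 0x10000000   # hypothetical fixed load address
ALIGNMENT = 0x10000           # 64 KB steps, chosen for illustration
SLOTS = 256                   # illustrative number of possible positions
FUNCTION_OFFSET = 0x1234      # hypothetical offset of the target function

def load_module(rng, aslr=True):
    """Return the base address at which the module is loaded."""
    if not aslr:
        return PREFERRED_BASE
    return PREFERRED_BASE + rng.randrange(SLOTS) * ALIGNMENT

def exploit_succeeds(hardcoded_address, base):
    """The exploit only works if its hardcoded address is still correct."""
    return hardcoded_address == base + FUNCTION_OFFSET

rng = random.Random(0)
hardcoded = PREFERRED_BASE + FUNCTION_OFFSET
runs = 1000
hits = sum(exploit_succeeds(hardcoded, load_module(rng)) for _ in range(runs))
print(f"{hits} of {runs} randomized runs hit the expected address")
```

Without randomization every run succeeds; with it, all runs in which the guess is wrong correspond to a jump to an unexpected address, which in practice usually means a crash.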

One possible attack approach is to locate and utilize non-randomized memory, since early implementations of ASLR do not randomize the complete memory but only some parts of it.

Furthermore, Windows allows ASLR to be disabled on a per-module basis: so, even if all Windows libraries are ASLR-protected, a lot of custom libraries are not, and they constitute an easy exploitation target for attackers.

For example, in the old CVE-2010-3971 the protection could be bypassed using the IE DLL mscorie.dll, which was distributed with ASLR disabled.
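Whether a module opts in to ASLR is recorded in its PE header: the `DllCharacteristics` field of the optional header carries the `IMAGE_DLLCHARACTERISTICS_DYNAMIC_BASE` flag (`0x0040`). The following sketch reads that flag from the raw bytes of a file. It is a minimal parser with only basic validation, and the function name `has_dynamic_base` is made up for the example.

```python
import struct

IMAGE_DLLCHARACTERISTICS_DYNAMIC_BASE = 0x0040

def has_dynamic_base(pe_bytes):
    """Return True if the PE image is marked as relocatable by ASLR."""
    if pe_bytes[:2] != b"MZ":
        raise ValueError("missing MZ signature")
    # e_lfanew at offset 0x3C points to the PE signature.
    (e_lfanew,) = struct.unpack_from("<I", pe_bytes, 0x3C)
    if pe_bytes[e_lfanew:e_lfanew + 4] != b"PE\0\0":
        raise ValueError("missing PE signature")
    # Skip the 4-byte signature and the 20-byte COFF file header.
    optional_header = e_lfanew + 4 + 20
    # DllCharacteristics sits at offset 70 in both PE32 and PE32+ headers.
    (dll_characteristics,) = struct.unpack_from(
        "<H", pe_bytes, optional_header + 70)
    return bool(dll_characteristics & IMAGE_DLLCHARACTERISTICS_DYNAMIC_BASE)
```

It could be used on any module on disk, for instance `has_dynamic_base(open(path, "rb").read())` where `path` is the DLL to inspect; a result of `False` marks the module as a candidate for the kind of bypass described above.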